Everyone watches NVIDIA. Meanwhile, a power transformer for a 2026 data center now takes 128 weeks to deliver: a roughly 2.5-year wait for a part you cannot substitute. Nearly half of the US data-center capacity planned for 2026 is already delayed or canceled. The real AI bottleneck is not the GPU. It is everything around the GPU.

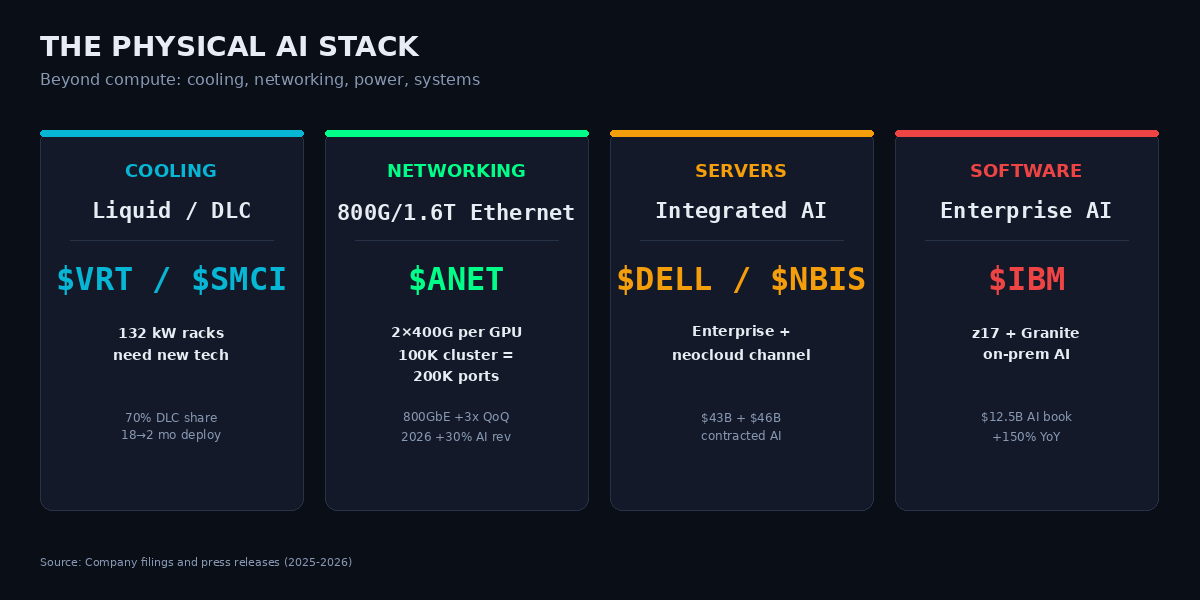

The physical stack has hit a wall, and the companies that make liquid cooling, AI-specific networking, integrated AI servers, and on-premise AI software are capturing the margin that NVIDIA cannot. Six of those companies live in the AICcelerate engine universe, each holding a distinct layer of the stack.

Why Now

Four simultaneous forces collided in Q1 2026:

- Hyperscaler 2026 capex committed: $660-690 billion — approximately 75% ($450B+) flowing directly into AI infrastructure. Nearly double 2025.

- GPU rack density jumped from 15 kW (2020) to 132 kW (GB200 NVL72 in 2025), and the 2026 Blackwell Ultra / Vera Rubin roadmap targets 240 kW+ per rack. Air cooling stops being practical above roughly 50 kW per rack: at 100 kW you would need to move about 35,000 CFM of air through a single rack. Liquid cooling, AI-specific networking, power distribution, and prefab modular construction are no longer optional.

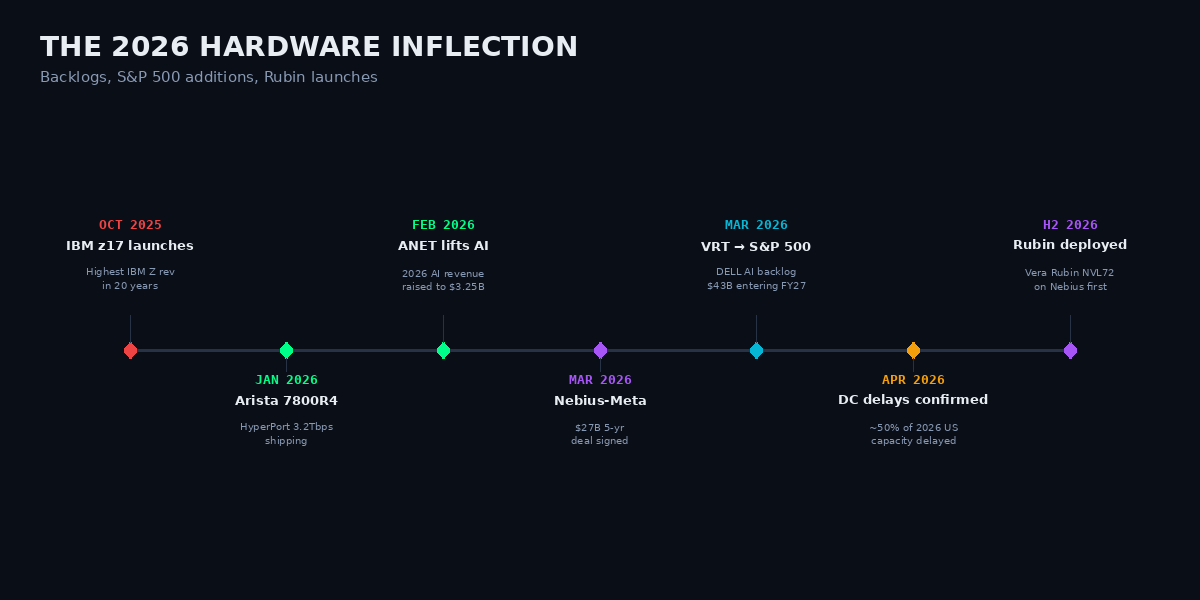

- Vertiv was added to the S&P 500 in March 2026 with $9.5-15B of backlog and order growth of +252% in Q4. Dell's AI-server backlog hit $43B entering FY27. Nebius signed a $27B five-year deal with Meta.

- Nearly 50% of 2026 US data-center capacity has been delayed or canceled, per reporting confirmed in April 2026 — mostly due to transformer, switchgear, and electrical-equipment shortages. The bottleneck is real and measurable.

When backlogs, cooling density, and physical shortages all inflect inside a single quarter, the winners of the next 18 months are the companies that own each physical-layer constraint. NVIDIA still captures the GPU rent. These six capture the surrounding rent that scales linearly per GPU.
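The airflow figure in the rack-density bullet above can be sanity-checked with the standard sensible-heat relation, P = ρ · c_p · V̇ · ΔT. The sketch below is a rough estimate, not a cooling design: the air density, specific heat, and the candidate temperature rises are assumptions chosen for illustration, and the article does not state which temperature rise its 35,000 CFM figure assumes. Under these assumed air properties, a rise of about 5 K across the rack lands close to that figure for a 100 kW rack.

```python
# Sensible-heat airflow estimate: P = rho * cp * V * dT, solved for V.
# Air properties are assumed round values (near room temperature, sea level).
AIR_DENSITY_KG_M3 = 1.2       # assumption
AIR_CP_J_PER_KG_K = 1005.0    # assumption
CFM_PER_M3_S = 2118.88        # 1 cubic metre per second in cubic feet per minute


def required_airflow_cfm(rack_power_kw: float, delta_t_k: float) -> float:
    """Airflow (CFM) needed to remove rack_power_kw with a delta_t_k air temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    watts = rack_power_kw * 1000.0
    m3_per_s = watts / (AIR_DENSITY_KG_M3 * AIR_CP_J_PER_KG_K * delta_t_k)
    return m3_per_s * CFM_PER_M3_S


if __name__ == "__main__":
    # Rack powers quoted in the article; temperature rises are illustrative.
    for rack_kw in (15, 50, 100, 132, 240):
        for delta_t in (5, 10, 15):
            cfm = required_airflow_cfm(rack_kw, delta_t)
            print(f"{rack_kw:>4} kW, dT={delta_t:>2} K -> {cfm:>9,.0f} CFM")
```

The required airflow grows linearly with rack power and inversely with the allowed temperature rise, which is why each jump in rack density pushes air cooling further out of reach.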

Figure 1 — 2026 Hardware Inflection

The Physical Stack: Six Names

Figure 2 — The Physical AI Stack

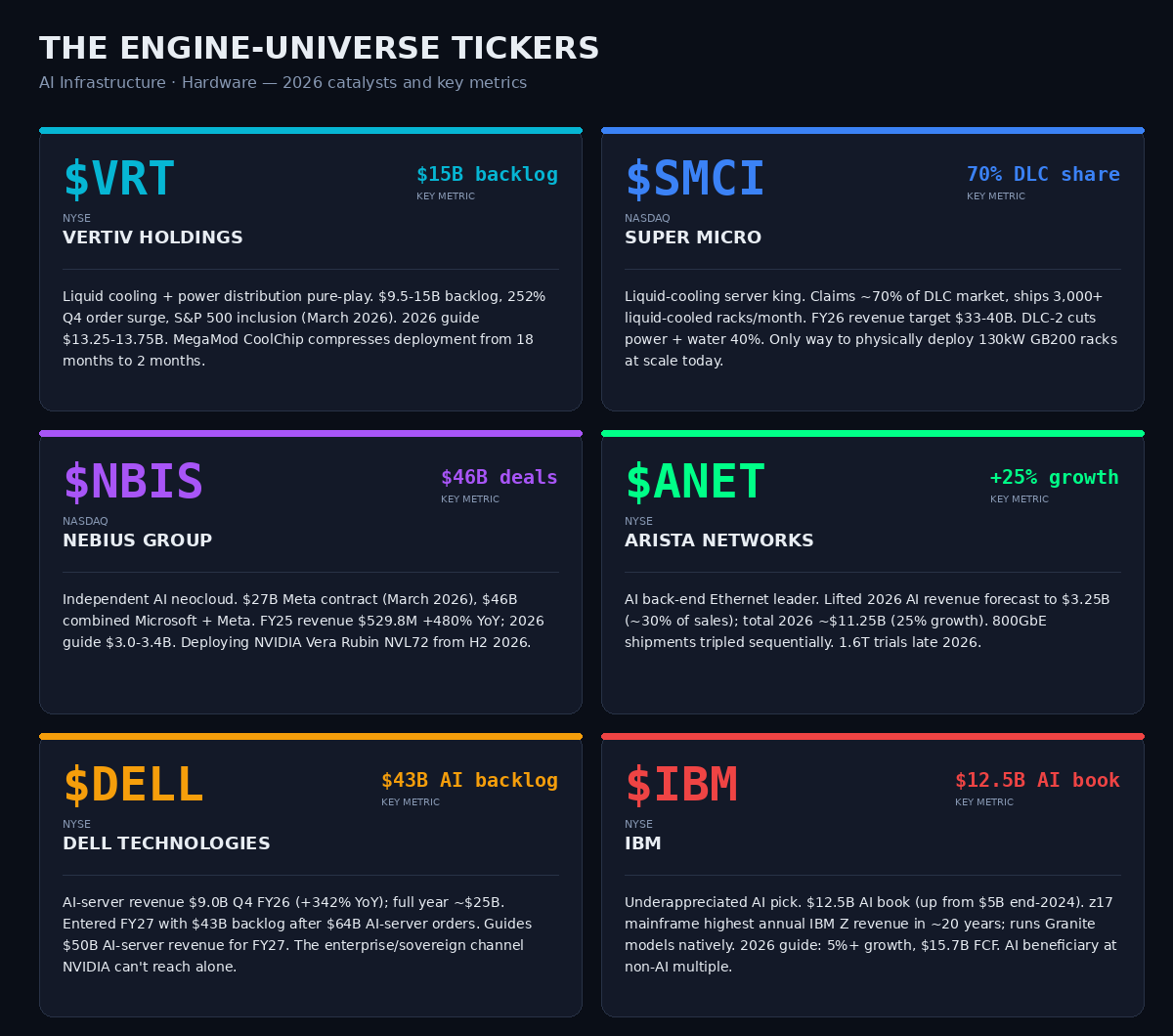

$VRT — Vertiv Holdings (NYSE) · Liquid Cooling + Power Distribution

The liquid-cooling and power-distribution pure-play. Backlog $9.5-15B, 252% Q4 order surge, S&P 500 inclusion in March 2026. Management guides $13.25-13.75B of revenue for 2026, with system-level AI orders (cooling plus power as an integrated solution) now the dominant revenue mix. The MegaMod CoolChip prefab module compresses deployment from 18 months to roughly 2 months — the single most valuable time-compression in the entire data-center build.

$SMCI — Super Micro Computer (NASDAQ) · Liquid-Cooled Servers

The liquid-cooling server king. Claims approximately 70% of the direct-liquid-cooling (DLC) market and can ship 3,000+ liquid-cooled racks per month. FY26 revenue target $33-40B. The new DLC-2 system cuts power and water consumption by up to 40%. When NVIDIA ships a 132 kW GB200 NVL72 rack, SMCI is the only way to physically deploy it at scale today. Margins have compressed to ~13.5%, but the volume story is still intact.

$NBIS — Nebius Group (NASDAQ) · Independent AI Neocloud

The independent AI neocloud with a $27B five-year Meta contract signed March 2026, plus $46B in combined Microsoft + Meta deals. Full-year 2025 revenue $529.8M (up ~480% YoY), 2026 guide $3.0-3.4B. Offers GB200/B200/B300/H200 today and will deploy NVIDIA Vera Rubin NVL72 from H2 2026. For investors who want AI capacity exposure without single-hyperscaler concentration, $NBIS is the picks-and-shovels alternative.

$ANET — Arista Networks (NYSE) · AI Ethernet

The AI back-end Ethernet leader. Arista lifted its 2026 AI revenue forecast to $3.25B (~30% of sales); total 2026 revenue is tracking to ~$11.25B (25% growth). Branded 800GbE share soared as shipments more than tripled sequentially in Q2 2025. The 7800R4 with HyperPort 3.2Tbps Ethernet began shipping in Q1 2026; 1.6T trials are scheduled for late 2026. Each Blackwell GPU ships with 2 × 400 GbE ports, so networking scales linearly with every GPU added.

$DELL — Dell Technologies (NYSE) · Integrated AI Servers

AI-server revenue was $9.0B in Q4 FY26 (+342% YoY); full year approximately $25B. Dell entered FY27 with $43B of backlog after $64B in AI-server orders. FY27 guide: $50B in AI-server revenue. Dell is the enterprise, sovereign, and neocloud channel that NVIDIA cannot reach alone — the one vendor that integrates servers, storage, networking, and services at tier-1 scale.

$IBM — IBM (NYSE) · Enterprise AI Software

The underappreciated AI pick. $12.5B AI book of business, up from $5B at end-2024. The z17 mainframe posted the highest annual IBM Z revenue in ~20 years and runs Granite models natively, so regulated enterprises can keep AI workloads on-prem. Software revenue +14%, watsonx plus Red Hat accelerating. 2026 guide: 5%+ constant-currency growth, $15.7B free cash flow. A rare AI beneficiary trading at a non-AI multiple.
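The guidance figures quoted across the six profiles above are easier to compare once converted to implied growth rates. The sketch below does that arithmetic for three of the names, using only numbers stated in this article; the helper names `implied_growth_pct` and `share_pct` are illustrative, and the results are only as good as the inputs (Dell's full-year figure, for example, is given as approximate).

```python
def implied_growth_pct(prior: float, guide: float) -> float:
    """Percentage growth implied by moving from prior to guide (same units)."""
    if prior <= 0:
        raise ValueError("prior must be positive")
    return (guide / prior - 1.0) * 100.0


def share_pct(part: float, total: float) -> float:
    """Part as a percentage of total (same units)."""
    if total <= 0:
        raise ValueError("total must be positive")
    return part / total * 100.0


if __name__ == "__main__":
    # All inputs in $B, taken from the profiles above.
    dell = implied_growth_pct(prior=25.0, guide=50.0)
    nebius_low = implied_growth_pct(prior=0.5298, guide=3.0)
    nebius_high = implied_growth_pct(prior=0.5298, guide=3.4)
    arista_ai_share = share_pct(part=3.25, total=11.25)

    print(f"DELL AI servers, FY26 -> FY27 guide: {dell:.0f}% growth")
    print(f"NBIS revenue, 2025 -> 2026 guide: {nebius_low:.0f}% to {nebius_high:.0f}% growth")
    print(f"ANET AI revenue as share of 2026 total: {arista_ai_share:.1f}%")
```

Putting the names on one scale shows how different the bets are: a doubling at Dell, a several-fold step-up at Nebius, and AI as a bit under a third of Arista's revenue.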

Figure 3 — Engine Universe Exposure

The Non-Obvious Insight

Networking scales linearly with GPU count, and nobody is pricing it in. Every NVIDIA Blackwell GPU ships with two 400 Gb/s Ethernet ports (800 Gb/s per GPU) for scale-out. A 100,000-GPU cluster needs 200,000 × 400G ports, plus spine switches, plus optics. That is why $ANET lifted its 2026 AI revenue forecast to $3.25B (~30% of sales): the network is not a one-time cost but a per-GPU tax that recurs with every cluster expansion. Branded 800GbE shipments more than tripled sequentially in Q2 2025.

Meanwhile the transformer bottleneck is worse than the GPU shortage: the US makes only ~20% of its own large power transformers, while China controls ~60% of global capacity. Only roughly one-third of the 12-16 GW of 2026 data-center capacity is actually under construction. The rest is waiting for electrical equipment that takes 2.5-5 years to ship.
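The port arithmetic in the networking argument above is simple enough to write down directly. The sketch below counts only the GPU-facing scale-out ports, using the two 400 Gb/s ports per GPU quoted in this article as defaults; spine switches and optics are deliberately left out because the article gives no counts for them, and the `ScaleOutEstimate` type and `scale_out_ports` function are illustrative names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScaleOutEstimate:
    gpu_count: int
    ports: int               # GPU-facing scale-out ports only
    per_gpu_gbps: int        # scale-out bandwidth per GPU
    aggregate_tbps: float    # total GPU-facing scale-out bandwidth


def scale_out_ports(gpu_count: int,
                    ports_per_gpu: int = 2,
                    port_speed_gbps: int = 400) -> ScaleOutEstimate:
    """Count GPU-facing Ethernet ports; defaults follow the figures quoted in the text."""
    if gpu_count < 0:
        raise ValueError("gpu_count must be non-negative")
    ports = gpu_count * ports_per_gpu
    per_gpu_gbps = ports_per_gpu * port_speed_gbps
    aggregate_tbps = gpu_count * per_gpu_gbps / 1000.0
    return ScaleOutEstimate(gpu_count, ports, per_gpu_gbps, aggregate_tbps)


if __name__ == "__main__":
    for n in (10_000, 100_000, 200_000):
        est = scale_out_ports(n)
        print(f"{est.gpu_count:>7,} GPUs -> {est.ports:>7,} x 400G ports, "
              f"{est.aggregate_tbps:,.0f} Tb/s GPU-facing bandwidth")
```

Because nothing in the calculation is a fixed cost, doubling the GPU count doubles the port count, which is the sense in which networking spend tracks GPU spend.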

Figure 4 — Rack Density: From Air Cooling to Full Immersion

Risks & Disconfirming Evidence

- Hyperscaler insourcing. Meta, Google, and Amazon all design custom silicon, and increasingly custom networking. $ANET customer concentration is the loudest warning. Dell's channel advantage can erode if hyperscalers go direct.

- Chinese supply dependency. 60% of global power-transformer capacity sits in China. Any trade escalation lengthens the bottleneck. DLC and 800GbE will attract every contract manufacturer in Taiwan; margin pressure follows volume pressure.

- AI capex air-pocket. If OpenAI and Anthropic monetization disappoints, the $690B capex cycle normalizes quickly and unwinds backlogs. $SMCI margins have already compressed to ~13.5%. A GPU-architecture pivot from Blackwell to Rubin could favor different OEMs, so the risk of share shifting between DELL and SMCI is real.

Engine Signal Context

The AICcelerate engine universe contains all six named tickers — $VRT, $SMCI, $NBIS, $ANET, $DELL, $IBM — alongside 173 other US equities. The engine runs signal detection on each independently of the macro narrative.